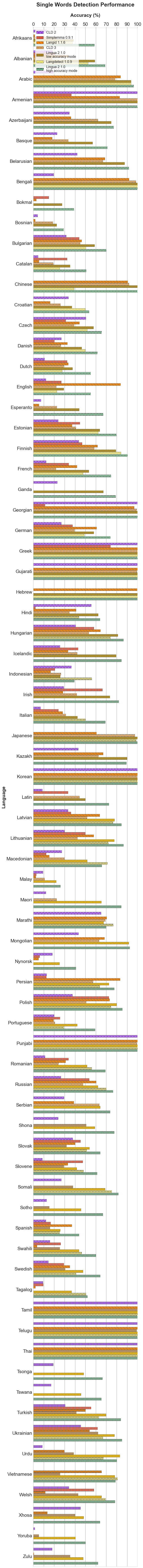

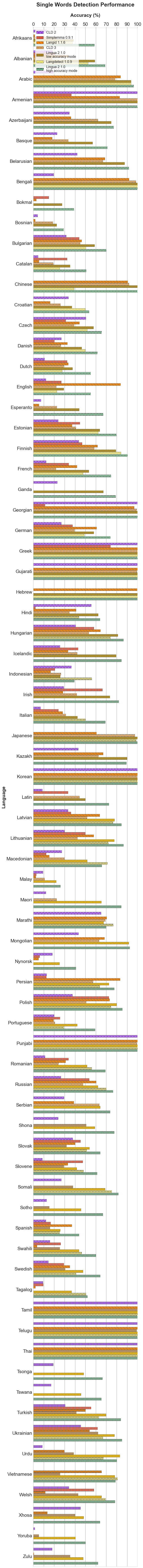

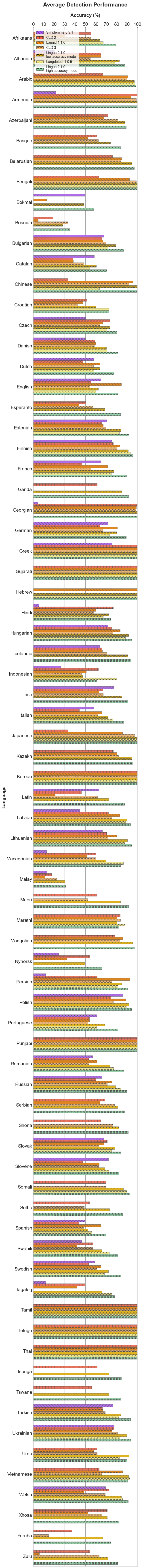

Bar plot

| Language | Average: Lingua (high accuracy mode) | Average: Lingua (low accuracy mode) | Average: Langdetect | Average: FastText | Average: FastSpell (conservative mode) | Average: FastSpell (aggressive mode) | Average: Langid | Average: CLD3 | Average: CLD2 | Average: Simplemma | Single Words: Lingua (high accuracy mode) | Single Words: Lingua (low accuracy mode) | Single Words: Langdetect | Single Words: FastText | Single Words: FastSpell (conservative mode) | Single Words: FastSpell (aggressive mode) | Single Words: Langid | Single Words: CLD3 | Single Words: CLD2 | Single Words: Simplemma | Word Pairs: Lingua (high accuracy mode) | Word Pairs: Lingua (low accuracy mode) | Word Pairs: Langdetect | Word Pairs: FastText | Word Pairs: FastSpell (conservative mode) | Word Pairs: FastSpell (aggressive mode) | Word Pairs: Langid | Word Pairs: CLD3 | Word Pairs: CLD2 | Word Pairs: Simplemma | Sentences: Lingua (high accuracy mode) | Sentences: Lingua (low accuracy mode) | Sentences: Langdetect | Sentences: FastText | Sentences: FastSpell (conservative mode) | Sentences: FastSpell (aggressive mode) | Sentences: Langid | Sentences: CLD3 | Sentences: CLD2 | Sentences: Simplemma |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| Afrikaans | 79 | 64 | 67 | 36 | 70 | 73 | 30 | 55 | 55 | - | 58 | 38 | 37 | 11 | 49 | 50 | 1 | 22 | 13 | - | 81 | 62 | 66 | 23 | 67 | 74 | 10 | 46 | 56 | - | 97 | 93 | 98 | 74 | 94 | 95 | 80 | 98 | 96 | - |
| Albanian | 88 | 80 | 79 | 66 | 66 | 66 | 65 | 55 | 65 | 20 | 69 | 54 | 53 | 35 | 35 | 35 | 33 | 18 | 18 | 21 | 95 | 86 | 84 | 66 | 66 | 66 | 63 | 48 | 77 | 17 | 100 | 99 | 99 | 98 | 98 | 98 | 98 | 98 | 99 | 23 |
| Arabic | 98 | 94 | 97 | 96 | 96 | 96 | 91 | 90 | 67 | - | 96 | 88 | 94 | 89 | 89 | 89 | 84 | 79 | 19 | - | 99 | 96 | 98 | 98 | 98 | 98 | 90 | 92 | 82 | - | 100 | 99 | 100 | 100 | 100 | 100 | 98 | 100 | 99 | - |
| Armenian | 100 | 100 | - | 100 | 100 | 100 | 94 | 99 | 100 | 22 | 100 | 100 | - | 100 | 100 | 100 | 83 | 100 | 100 | 36 | 100 | 100 | - | 100 | 100 | 100 | 99 | 100 | 100 | 14 | 100 | 100 | - | 100 | 100 | 100 | 100 | 97 | 100 | 14 |
| Azerbaijani | 90 | 82 | - | 78 | 69 | 85 | 68 | 81 | 72 | - | 77 | 71 | - | 57 | 43 | 67 | 36 | 62 | 34 | - | 92 | 78 | - | 80 | 69 | 90 | 69 | 82 | 82 | - | 99 | 96 | - | 98 | 94 | 100 | 98 | 99 | 99 | - |
| Basque | 84 | 75 | - | 71 | 71 | 71 | 52 | 62 | 61 | - | 71 | 56 | - | 44 | 44 | 44 | 18 | 33 | 23 | - | 87 | 76 | - | 70 | 70 | 70 | 52 | 62 | 69 | - | 93 | 92 | - | 100 | 100 | 100 | 86 | 92 | 91 | - |
| Belarusian | 97 | 92 | - | 85 | 92 | 95 | 85 | 84 | 76 | - | 92 | 80 | - | 69 | 81 | 87 | 69 | 67 | 42 | - | 99 | 95 | - | 88 | 94 | 98 | 87 | 86 | 87 | - | 100 | 100 | - | 98 | 99 | 100 | 99 | 100 | 99 | - |
| Bengali | 100 | 100 | 100 | 98 | 98 | 98 | 92 | 99 | 63 | - | 100 | 100 | 100 | 94 | 94 | 94 | 92 | 98 | 19 | - | 100 | 100 | 100 | 99 | 99 | 99 | 88 | 99 | 69 | - | 100 | 100 | 100 | 100 | 100 | 100 | 97 | 99 | 99 | - |
| Bokmal | 58 | 50 | - | - | 69 | 75 | 13 | - | - | 50 | 39 | 27 | - | - | 53 | 55 | 3 | - | - | 15 | 59 | 47 | - | - | 70 | 77 | 12 | - | - | 45 | 77 | 75 | - | - | 85 | 91 | 23 | - | - | 90 |
| Bosnian | 35 | 29 | - | 9 | 54 | 65 | 5 | 33 | 19 | - | 29 | 23 | - | 9 | 54 | 54 | 2 | 19 | 4 | - | 35 | 29 | - | 10 | 64 | 76 | 4 | 28 | 15 | - | 41 | 36 | - | 8 | 44 | 64 | 8 | 52 | 36 | - |
| Bulgarian | 87 | 78 | 72 | 78 | 89 | 92 | 67 | 70 | 66 | 68 | 70 | 56 | 51 | 56 | 80 | 83 | 46 | 45 | 32 | 44 | 91 | 81 | 68 | 81 | 88 | 95 | 62 | 66 | 72 | 67 | 99 | 96 | 96 | 99 | 98 | 99 | 93 | 98 | 93 | 91 |
| Catalan | 70 | 58 | 54 | 57 | 63 | 66 | 38 | 48 | 38 | 59 | 51 | 33 | 25 | 33 | 42 | 44 | 5 | 19 | 4 | 32 | 74 | 60 | 51 | 57 | 63 | 67 | 29 | 42 | 30 | 62 | 87 | 82 | 86 | 83 | 85 | 88 | 81 | 84 | 79 | 81 |
| Chinese | 100 | 100 | 64 | 71 | 71 | 71 | 96 | 92 | 33 | - | 100 | 100 | 39 | 46 | 46 | 46 | 90 | 92 | - | - | 100 | 100 | 56 | 68 | 68 | 68 | 97 | 83 | 2 | - | 100 | 100 | 97 | 100 | 100 | 100 | 100 | 100 | 98 | - |
| Croatian | 73 | 60 | 73 | 47 | 72 | 81 | 48 | 42 | 51 | - | 53 | 36 | 49 | 28 | 62 | 64 | 16 | 26 | 34 | - | 74 | 57 | 72 | 42 | 79 | 87 | 38 | 42 | 47 | - | 90 | 86 | 97 | 72 | 76 | 93 | 90 | 58 | 73 | - |
| Czech | 80 | 71 | 71 | 76 | 76 | 80 | 66 | 64 | 74 | 50 | 66 | 54 | 52 | 58 | 61 | 64 | 44 | 39 | 50 | 31 | 84 | 72 | 73 | 79 | 78 | 83 | 69 | 65 | 80 | 44 | 91 | 87 | 88 | 92 | 88 | 92 | 86 | 88 | 91 | 76 |
| Danish | 81 | 70 | 70 | 62 | 76 | 78 | 60 | 58 | 59 | 50 | 61 | 45 | 50 | 35 | 56 | 58 | 33 | 26 | 27 | 20 | 84 | 70 | 68 | 57 | 75 | 78 | 61 | 54 | 56 | 47 | 98 | 95 | 93 | 95 | 98 | 99 | 86 | 95 | 94 | 83 |
| Dutch | 77 | 64 | 58 | 78 | 71 | 78 | 64 | 58 | 47 | 58 | 55 | 36 | 27 | 55 | 46 | 55 | 34 | 29 | 11 | 32 | 81 | 61 | 49 | 81 | 70 | 81 | 61 | 47 | 42 | 50 | 96 | 94 | 98 | 100 | 97 | 99 | 98 | 97 | 90 | 92 |
| English | 81 | 63 | 60 | 96 | 96 | 96 | 85 | 54 | 56 | 65 | 55 | 29 | 22 | 90 | 90 | 90 | 84 | 22 | 12 | 27 | 89 | 62 | 58 | 98 | 98 | 98 | 71 | 44 | 55 | 69 | 99 | 97 | 99 | 100 | 100 | 100 | 99 | 97 | 100 | 98 |
| Esperanto | 84 | 66 | - | 76 | 76 | 76 | 44 | 57 | 50 | - | 67 | 44 | - | 51 | 51 | 51 | 5 | 22 | 7 | - | 85 | 61 | - | 79 | 79 | 79 | 30 | 51 | 46 | - | 98 | 93 | - | 100 | 100 | 100 | 96 | 98 | 98 | - |
| Estonian | 92 | 83 | 83 | 73 | 73 | 73 | 67 | 70 | 65 | 71 | 80 | 62 | 62 | 50 | 50 | 50 | 37 | 41 | 24 | 44 | 96 | 88 | 87 | 73 | 73 | 73 | 67 | 69 | 73 | 70 | 100 | 99 | 100 | 96 | 97 | 97 | 98 | 99 | 99 | 97 |
| Finnish | 96 | 91 | 93 | 92 | 93 | 93 | 83 | 80 | 77 | 76 | 90 | 77 | 84 | 82 | 82 | 82 | 62 | 58 | 44 | 47 | 98 | 95 | 95 | 96 | 96 | 96 | 88 | 84 | 89 | 81 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 99 | 98 | 100 |
| French | 89 | 77 | 75 | 83 | 83 | 83 | 71 | 55 | 46 | 65 | 74 | 52 | 48 | 62 | 62 | 62 | 42 | 22 | 12 | 34 | 94 | 83 | 78 | 86 | 86 | 86 | 74 | 49 | 48 | 68 | 99 | 98 | 99 | 99 | 99 | 99 | 98 | 94 | 80 | 94 |
| Ganda | 91 | 84 | - | - | - | - | - | - | 61 | - | 79 | 65 | - | - | - | - | - | - | 23 | - | 95 | 87 | - | - | - | - | - | - | 62 | - | 100 | 100 | - | - | - | - | - | - | 99 | - |
| Georgian | 100 | 100 | - | 99 | 99 | 99 | 99 | 98 | 100 | 4 | 100 | 100 | - | 97 | 97 | 97 | 97 | 99 | 100 | 11 | 100 | 100 | - | 99 | 99 | 99 | 100 | 100 | 100 | 2 | 100 | 100 | - | 100 | 100 | 100 | 100 | 96 | 100 | 0 |
| German | 89 | 80 | 73 | 89 | 89 | 89 | 81 | 66 | 64 | 72 | 74 | 57 | 49 | 76 | 76 | 76 | 61 | 40 | 27 | 38 | 94 | 84 | 70 | 93 | 93 | 93 | 81 | 62 | 66 | 78 | 100 | 99 | 100 | 100 | 100 | 100 | 100 | 98 | 98 | 99 |
| Greek | 100 | 100 | 100 | 99 | 99 | 99 | 100 | 100 | 100 | 75 | 100 | 100 | 100 | 98 | 98 | 98 | 100 | 100 | 100 | 74 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 60 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 92 |
| Gujarati | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 99 | 99 | 99 | 100 | 99 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - |
| Hebrew | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | - | - | 100 | 100 | 100 | 99 | 99 | 99 | 100 | - | - | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | - | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | - | - |
| Hindi | 73 | 33 | 68 | 87 | 72 | 88 | 60 | 58 | 77 | 5 | 61 | 11 | 44 | 74 | 53 | 77 | 41 | 34 | 56 | 2 | 64 | 20 | 60 | 88 | 65 | 89 | 47 | 45 | 76 | 4 | 94 | 67 | 99 | 99 | 96 | 99 | 92 | 95 | 99 | 11 |
| Hungarian | 95 | 90 | 88 | 92 | 92 | 92 | 83 | 76 | 75 | 72 | 87 | 77 | 73 | 80 | 80 | 80 | 64 | 53 | 41 | 58 | 98 | 94 | 91 | 96 | 96 | 96 | 86 | 76 | 85 | 62 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 99 | 100 | 95 |
| Icelandic | 93 | 88 | - | 65 | 70 | 71 | 66 | 71 | 66 | 64 | 83 | 72 | - | 39 | 49 | 50 | 33 | 42 | 26 | 43 | 97 | 92 | - | 57 | 64 | 65 | 66 | 70 | 73 | 59 | 100 | 99 | - | 98 | 99 | 99 | 99 | 99 | 99 | 90 |
| Indonesian | 61 | 47 | 80 | 69 | 68 | 77 | 51 | 46 | 62 | 26 | 39 | 25 | 56 | 43 | 52 | 56 | 16 | 26 | 36 | 20 | 61 | 46 | 84 | 68 | 73 | 82 | 54 | 45 | 63 | 26 | 83 | 71 | 100 | 95 | 78 | 93 | 82 | 66 | 88 | 32 |
| Irish | 91 | 85 | - | 60 | 66 | 69 | 63 | 67 | 66 | 77 | 82 | 70 | - | 35 | 41 | 47 | 28 | 42 | 29 | 66 | 94 | 90 | - | 57 | 66 | 68 | 64 | 66 | 78 | 76 | 96 | 95 | - | 89 | 93 | 93 | 97 | 94 | 92 | 90 |
| Italian | 87 | 71 | 77 | 89 | 89 | 89 | 66 | 62 | 44 | 58 | 69 | 42 | 50 | 74 | 74 | 74 | 28 | 31 | 7 | 24 | 92 | 74 | 81 | 92 | 92 | 92 | 70 | 57 | 32 | 57 | 100 | 98 | 99 | 100 | 100 | 100 | 100 | 98 | 93 | 94 |
| Japanese | 100 | 100 | 100 | 87 | 87 | 87 | 86 | 98 | 33 | - | 100 | 100 | 99 | 72 | 72 | 72 | 61 | 97 | - | - | 100 | 100 | 100 | 89 | 89 | 89 | 96 | 96 | - | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - |
| Kazakh | 96 | 94 | - | 88 | 76 | 91 | 80 | 82 | 77 | - | 89 | 88 | - | 72 | 52 | 79 | 67 | 62 | 43 | - | 98 | 94 | - | 90 | 80 | 94 | 78 | 83 | 88 | - | 100 | 100 | - | 100 | 96 | 100 | 96 | 99 | 99 | - |
| Korean | 100 | 100 | 100 | 99 | 99 | 99 | 100 | 99 | 100 | - | 100 | 100 | 100 | 98 | 98 | 98 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 98 | 100 | - |
| Latin | 87 | 73 | - | 50 | 50 | 50 | 21 | 62 | 46 | 63 | 72 | 49 | - | 24 | 24 | 24 | - | 44 | 9 | 33 | 93 | 76 | - | 41 | 41 | 41 | 2 | 58 | 42 | 63 | 97 | 94 | - | 85 | 86 | 86 | 61 | 83 | 88 | 93 |
| Latvian | 93 | 87 | 89 | 82 | 82 | 84 | 83 | 75 | 72 | 45 | 85 | 75 | 76 | 65 | 66 | 69 | 64 | 51 | 33 | 36 | 97 | 90 | 92 | 83 | 84 | 86 | 86 | 77 | 84 | 33 | 99 | 97 | 99 | 97 | 97 | 98 | 98 | 98 | 98 | 65 |
| Lithuanian | 95 | 87 | 87 | 81 | 81 | 81 | 80 | 72 | 70 | 66 | 86 | 76 | 71 | 61 | 61 | 61 | 58 | 42 | 30 | 50 | 98 | 89 | 91 | 83 | 83 | 83 | 85 | 75 | 82 | 62 | 100 | 98 | 100 | 99 | 99 | 99 | 99 | 99 | 99 | 88 |
| Macedonian | 84 | 72 | 86 | 74 | 86 | 93 | 51 | 60 | 60 | 13 | 66 | 52 | 71 | 51 | 77 | 83 | 15 | 30 | 27 | 12 | 86 | 70 | 88 | 72 | 83 | 96 | 44 | 54 | 70 | 11 | 99 | 95 | 100 | 100 | 97 | 99 | 94 | 97 | 84 | 15 |
| Malay | 31 | 31 | - | 15 | 39 | 52 | 11 | 22 | 18 | 13 | 26 | 22 | - | 14 | 36 | 38 | 2 | 11 | 9 | 3 | 38 | 36 | - | 19 | 52 | 64 | 9 | 22 | 22 | 10 | 28 | 35 | - | 12 | 29 | 54 | 22 | 34 | 23 | 26 |
| Maori | 92 | 83 | - | - | - | - | - | 52 | 61 | - | 84 | 64 | - | - | - | - | - | 22 | 12 | - | 92 | 88 | - | - | - | - | - | 43 | 72 | - | 99 | 98 | - | - | - | - | - | 91 | 98 | - |
| Marathi | 85 | 39 | 88 | 80 | 8 | 75 | 80 | 84 | 83 | - | 74 | 16 | 77 | 61 | 9 | 61 | 70 | 69 | 65 | - | 85 | 30 | 89 | 81 | 15 | 69 | 79 | 84 | 86 | - | 96 | 72 | 98 | 99 | 1 | 95 | 91 | 98 | 99 | - |
| Mongolian | 97 | 95 | - | 81 | 85 | 89 | 86 | 83 | 78 | - | 92 | 88 | - | 59 | 66 | 72 | 68 | 63 | 43 | - | 99 | 98 | - | 86 | 91 | 94 | 90 | 87 | 92 | - | 99 | 99 | - | 98 | 99 | 100 | 99 | 99 | 100 | - |
| Nynorsk | 66 | 52 | - | 29 | 63 | 70 | 32 | - | 54 | 24 | 41 | 25 | - | 8 | 42 | 43 | 5 | - | 18 | 6 | 66 | 49 | - | 18 | 58 | 70 | 16 | - | 50 | 22 | 91 | 81 | - | 61 | 87 | 96 | 75 | - | 93 | 45 |
| Persian | 90 | 80 | 81 | 90 | 79 | 92 | 92 | 76 | 61 | 12 | 78 | 62 | 64 | 79 | 57 | 84 | 83 | 57 | 13 | 12 | 94 | 80 | 80 | 92 | 81 | 94 | 94 | 70 | 72 | 5 | 100 | 98 | 100 | 100 | 98 | 99 | 100 | 99 | 99 | 18 |
| Polish | 95 | 90 | 89 | 92 | 92 | 92 | 89 | 77 | 75 | 86 | 85 | 77 | 74 | 80 | 80 | 80 | 73 | 51 | 38 | 72 | 98 | 93 | 93 | 97 | 97 | 97 | 93 | 80 | 87 | 87 | 100 | 99 | 100 | 100 | 100 | 100 | 100 | 99 | 99 | 99 |
| Portuguese | 81 | 69 | 60 | 73 | 81 | 84 | 54 | 53 | 54 | 61 | 59 | 42 | 29 | 47 | 66 | 67 | 19 | 21 | 20 | 26 | 85 | 70 | 54 | 71 | 81 | 85 | 44 | 40 | 48 | 60 | 99 | 95 | 98 | 99 | 96 | 99 | 98 | 97 | 94 | 97 |
| Punjabi | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 99 | 99 | 99 | 100 | 99 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - |
| Romanian | 87 | 72 | 77 | 64 | 64 | 64 | 61 | 53 | 54 | 57 | 69 | 49 | 56 | 38 | 38 | 38 | 31 | 24 | 11 | 34 | 92 | 74 | 79 | 60 | 60 | 60 | 60 | 48 | 53 | 51 | 99 | 94 | 97 | 95 | 95 | 95 | 92 | 88 | 96 | 86 |
| Russian | 90 | 78 | 84 | 94 | 94 | 97 | 75 | 71 | 60 | 66 | 76 | 59 | 70 | 86 | 88 | 92 | 60 | 48 | 26 | 54 | 95 | 84 | 87 | 98 | 97 | 99 | 75 | 72 | 68 | 62 | 98 | 92 | 96 | 100 | 98 | 99 | 91 | 93 | 87 | 83 |
| Serbian | 88 | 78 | - | 76 | 53 | 76 | 64 | 78 | 69 | - | 74 | 62 | - | 54 | 47 | 54 | 39 | 63 | 29 | - | 90 | 80 | - | 76 | 58 | 76 | 63 | 75 | 78 | - | 99 | 92 | - | 98 | 52 | 98 | 89 | 95 | 99 | - |
| Shona | 91 | 81 | - | - | - | - | - | 76 | 65 | - | 78 | 56 | - | - | - | - | - | 51 | 24 | - | 96 | 86 | - | - | - | - | - | 79 | 71 | - | 100 | 100 | - | - | - | - | - | 99 | 99 | - |
| Slovak | 84 | 75 | 74 | 65 | 80 | 83 | 68 | 63 | 71 | 68 | 64 | 49 | 50 | 41 | 63 | 64 | 40 | 32 | 38 | 45 | 90 | 78 | 75 | 62 | 81 | 86 | 66 | 61 | 76 | 66 | 99 | 97 | 98 | 91 | 97 | 98 | 97 | 96 | 99 | 93 |
| Slovene | 82 | 67 | 73 | 59 | 75 | 77 | 63 | 63 | 48 | 72 | 61 | 39 | 48 | 32 | 56 | 57 | 33 | 29 | 8 | 48 | 87 | 68 | 72 | 54 | 74 | 78 | 61 | 60 | 42 | 72 | 99 | 93 | 98 | 90 | 96 | 97 | 95 | 99 | 92 | 96 |
| Somali | 92 | 85 | 90 | 24 | 51 | 52 | - | 69 | 70 | - | 82 | 64 | 76 | 4 | 18 | 20 | - | 38 | 27 | - | 96 | 90 | 95 | 15 | 46 | 48 | - | 70 | 83 | - | 100 | 100 | 100 | 52 | 89 | 89 | - | 100 | 99 | - |
| Sotho | 86 | 72 | - | - | - | - | - | 49 | 54 | - | 67 | 43 | - | - | - | - | - | 15 | 13 | - | 90 | 75 | - | - | - | - | - | 33 | 54 | - | 100 | 97 | - | - | - | - | - | 98 | 95 | - |
| Spanish | 70 | 56 | 56 | 74 | 64 | 73 | 65 | 48 | 43 | 50 | 44 | 26 | 25 | 51 | 48 | 52 | 37 | 16 | 12 | 16 | 69 | 49 | 46 | 72 | 60 | 74 | 59 | 32 | 34 | 41 | 97 | 94 | 98 | 100 | 85 | 94 | 98 | 96 | 85 | 92 |
| Swahili | 81 | 70 | 73 | 41 | 41 | 41 | 42 | 57 | 57 | 46 | 60 | 43 | 47 | 7 | 7 | 7 | 3 | 25 | 16 | 26 | 84 | 68 | 74 | 24 | 24 | 24 | 24 | 49 | 59 | 41 | 98 | 97 | 99 | 92 | 92 | 92 | 98 | 98 | 97 | 72 |
| Swedish | 84 | 72 | 68 | 76 | 79 | 81 | 65 | 61 | 53 | 59 | 64 | 46 | 40 | 51 | 57 | 59 | 35 | 30 | 14 | 29 | 88 | 76 | 67 | 78 | 82 | 85 | 63 | 56 | 52 | 62 | 99 | 94 | 96 | 98 | 98 | 99 | 96 | 96 | 93 | 87 |
| Tagalog | 78 | 66 | 76 | 45 | 46 | 46 | 42 | - | 50 | 12 | 52 | 36 | 51 | 11 | 11 | 11 | 2 | - | 9 | 9 | 83 | 67 | 78 | 28 | 28 | 28 | 26 | - | 44 | 11 | 98 | 96 | 99 | 98 | 98 | 98 | 98 | - | 95 | 15 |
| Tamil | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 99 | 100 | - |
| Telugu | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 99 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 99 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 99 | 100 | - |
| Thai | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 99 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 100 | - | 100 | 100 | 100 | 100 | 100 | 100 | 100 | 98 | 100 | - |
| Tsonga | 84 | 72 | - | - | - | - | - | - | 61 | - | 66 | 46 | - | - | - | - | - | - | 19 | - | 89 | 73 | - | - | - | - | - | - | 68 | - | 98 | 97 | - | - | - | - | - | - | 97 | - |
| Tswana | 84 | 71 | - | - | - | - | - | - | 56 | - | 65 | 44 | - | - | - | - | - | - | 17 | - | 88 | 73 | - | - | - | - | - | - | 57 | - | 99 | 96 | - | - | - | - | - | - | 94 | - |
| Turkish | 94 | 87 | 82 | 86 | 86 | 86 | 67 | 69 | 66 | 76 | 84 | 71 | 63 | 70 | 70 | 70 | 50 | 41 | 30 | 55 | 98 | 91 | 84 | 88 | 88 | 88 | 67 | 70 | 71 | 78 | 100 | 100 | 100 | 100 | 100 | 100 | 84 | 97 | 97 | 96 |
| Ukrainian | 92 | 86 | 83 | 91 | 95 | 98 | 76 | 81 | 77 | 78 | 84 | 75 | 66 | 78 | 90 | 94 | 54 | 62 | 46 | 62 | 97 | 92 | 84 | 94 | 95 | 98 | 77 | 83 | 88 | 75 | 95 | 93 | 98 | 100 | 100 | 100 | 96 | 98 | 99 | 97 |
| Urdu | 90 | 79 | 83 | 63 | 75 | 80 | 58 | 61 | 61 | - | 80 | 65 | 67 | 40 | 59 | 68 | 30 | 39 | 8 | - | 94 | 78 | 83 | 50 | 68 | 74 | 46 | 53 | 75 | - | 96 | 94 | 97 | 99 | 99 | 99 | 99 | 92 | 99 | - |
| Vietnamese | 91 | 87 | 93 | 89 | 89 | 89 | 86 | 66 | 63 | - | 79 | 76 | 81 | 71 | 71 | 71 | 65 | 26 | - | - | 94 | 87 | 98 | 97 | 97 | 97 | 93 | 74 | 90 | - | 99 | 98 | 100 | 100 | 100 | 100 | 100 | 99 | 100 | - |
| Welsh | 91 | 82 | 85 | 64 | 69 | 72 | 49 | 69 | 72 | 69 | 78 | 61 | 69 | 35 | 41 | 46 | 11 | 43 | 34 | 58 | 96 | 87 | 88 | 61 | 71 | 74 | 39 | 66 | 85 | 60 | 99 | 99 | 99 | 96 | 96 | 97 | 95 | 98 | 98 | 90 |
| Xhosa | 82 | 69 | - | - | - | - | 53 | 66 | 71 | - | 64 | 45 | - | - | - | - | 13 | 40 | 45 | - | 85 | 67 | - | - | - | - | 49 | 65 | 71 | - | 98 | 94 | - | - | - | - | 96 | 92 | 97 | - |
| Yoruba | 74 | 62 | - | 8 | 8 | 8 | - | 15 | 37 | - | 50 | 33 | - | 1 | 1 | 1 | - | 5 | 1 | - | 77 | 61 | - | 1 | 1 | 1 | - | 11 | 22 | - | 96 | 92 | - | 21 | 22 | 22 | - | 28 | 88 | - |
| Zulu | 81 | 70 | - | - | - | - | 6 | 63 | 54 | - | 62 | 45 | - | - | - | - | 0 | 35 | 18 | - | 83 | 72 | - | - | - | - | 6 | 63 | 51 | - | 97 | 94 | - | - | - | - | 11 | 92 | 93 | - |
| Mean |  86 86 |

78 78 |

82 82 |

74 74 |

77 77 |

81 81 |

68 68 |

69 69 |

65 65 |

52 52 |

74 74 |

61 61 |

65 65 |

58 58 |

62 62 |

66 66 |

48 48 |

48 48 |

34 34 |

34 34 |

89 89 |

78 78 |

82 82 |

74 74 |

77 77 |

82 82 |

65 65 |

67 67 |

68 68 |

50 50 |

96 96 |

93 93 |

98 98 |

92 92 |

91 91 |

96 96 |

90 90 |

93 93 |

94 94 |

73 73 |

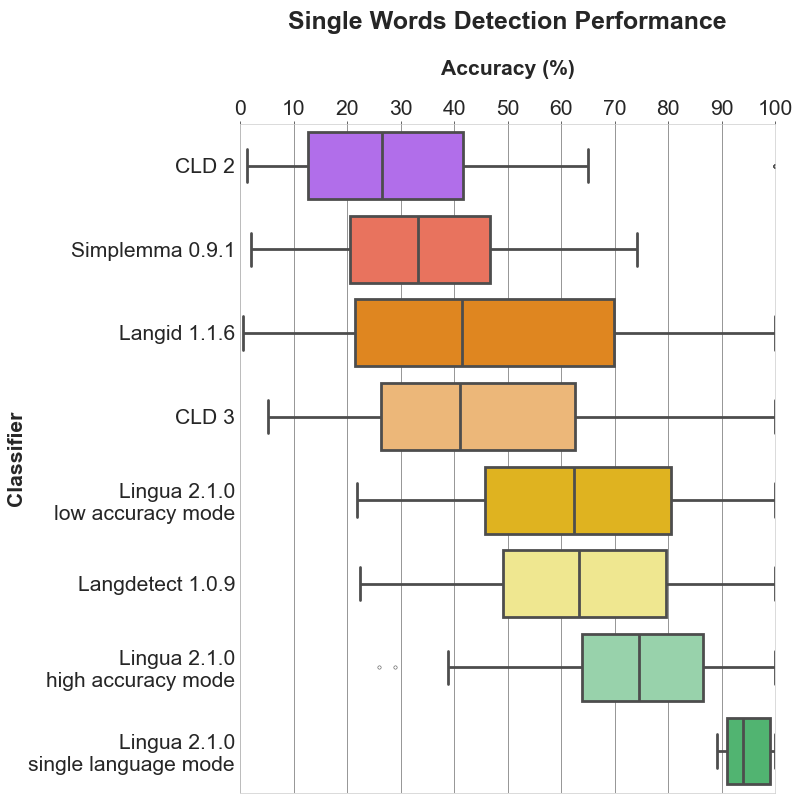

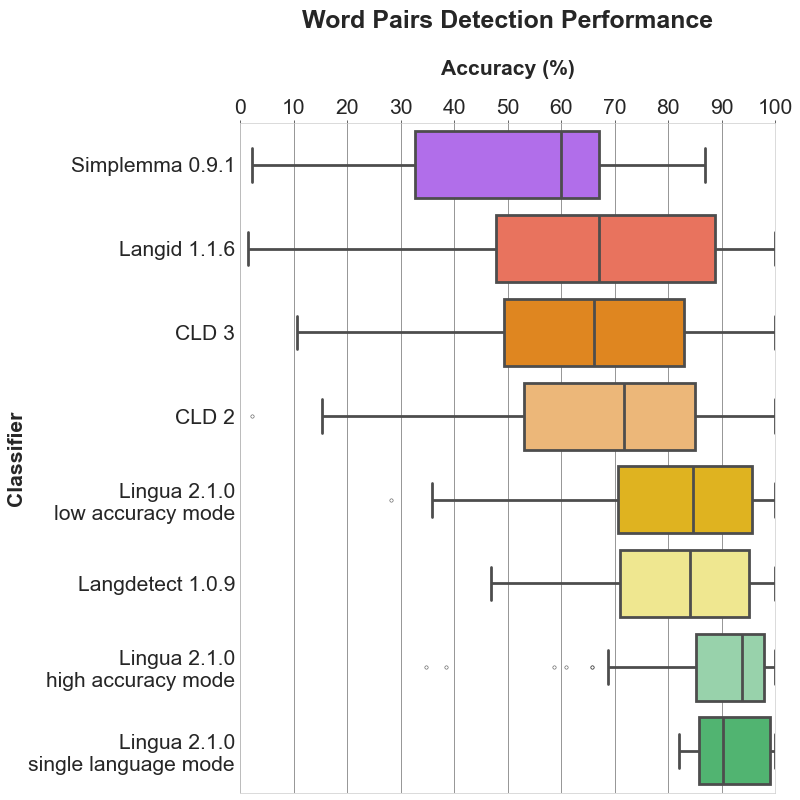

| Median | 89.0 | 79.0 | 82.5 | 78.0 | 79.0 | 83.0 | 67.0 | 68.0 | 63.0 | 59.0 | 74.0 | 57.0 | 63.5 | 57.5 | 61.0 | 67.0 | 41.5 | 41.0 | 26.5 | 33.0 | 94.0 | 81.0 | 84.0 | 81.0 | 81.0 | 86.0 | 67.0 | 66.0 | 71.5 | 60.0 | 99.0 | 97.0 | 99.0 | 99.0 | 98.0 | 99.0 | 98.0 | 98.0 | 98.0 | 90.0 |

| Standard Deviation | 13.12 | 17.34 | 13.43 | 23.07 | 19.9 | 17.0 | 24.61 | 19.04 | 18.57 | 23.46 | 18.48 | 25.01 | 23.72 | 28.52 | 25.31 | 24.22 | 32.33 | 27.86 | 28.74 | 18.94 | 13.14 | 18.95 | 15.64 | 26.45 | 21.67 | 19.67 | 28.5 | 21.83 | 22.7 | 24.48 | 11.05 | 11.91 | 2.78 | 19.46 | 19.1 | 11.78 | 20.21 | 13.95 | 12.25 | 31.91 |